Polling 101: Chapter 3 - When can you trust the polls?

Hint: It's not whether you like or hate what they're saying

One of the things that goes along with election season is a proliferation of political polls, and with that, questions about how valid and reliable they are. Over the next few weeks, I’m going to address some of the frequently asked questions about political polls. You can read part one here, and part two here.

Here’s the third part.

But weren’t the polls proven wrong in recent elections?

This is a common question, and one where the answer is actually less straightforward than pollsters would like.

In the last few elections, the polls haven’t been particularly reliable at predicting the exact results. But they have given a fairly good picture generally. A bigger problem is that people have been bad at reading polls.

For example, in the 2016 US Presidential election, a lot of the polls had Clinton winning the popular vote. So a lot of people took the polls to be saying that Clinton was going to win the election. However, the state-by-state polls were not nearly so one-sided.

Clinton did win the popular vote, and by quite a margin (almost 3 million votes). But the US Presidential election is not decided by who wins the most votes, but rather by who wins the most Electoral College votes. And on that front, the polls actually did quite well.

The various sites that compiled “poll of polls” state-by-state actually had the final result within the margin of error for 47 out of the 50 states. That’s 94%, which is very close to the 95% that pollsters aim for.
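To see how close 47 out of 50 is to "on target", here's a rough check. It assumes each state's result independently has a 95% chance of landing inside its margin of error. That assumption is mine, and it's optimistic: in reality polling errors are correlated between states, so misses tend to cluster. Under that assumption, we'd expect about 2.5 misses, and getting 3 or more misses happens a little under half the time.

```python
from math import comb


def prob_at_most(k: int, n: int, p: float) -> float:
    """P(X <= k) for X ~ Binomial(n, p), summed directly from the pmf."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))


n_states = 50
hits = 47          # states where the result fell inside the margin of error
coverage = 0.95    # nominal 95% confidence level (assumed independent per state)

print(f"Observed hit rate: {hits / n_states:.0%}")                       # 94%
print(f"Expected misses at 95%: {n_states * (1 - coverage):.1f}")        # 2.5
print(f"P(47 or fewer hits): {prob_at_most(hits, n_states, coverage):.2f}")  # about 0.46
```

In other words, 47 out of 50 is entirely consistent with polls that are doing what they claim.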

The big issue was not that the pollsters were 1% out. The big issue was that the story most of the media were focused on was not the one that the polls were actually telling.

Sometimes the polls tell the correct story, but the reporters don’t notice it.

But other times, the polls are consistently wrong

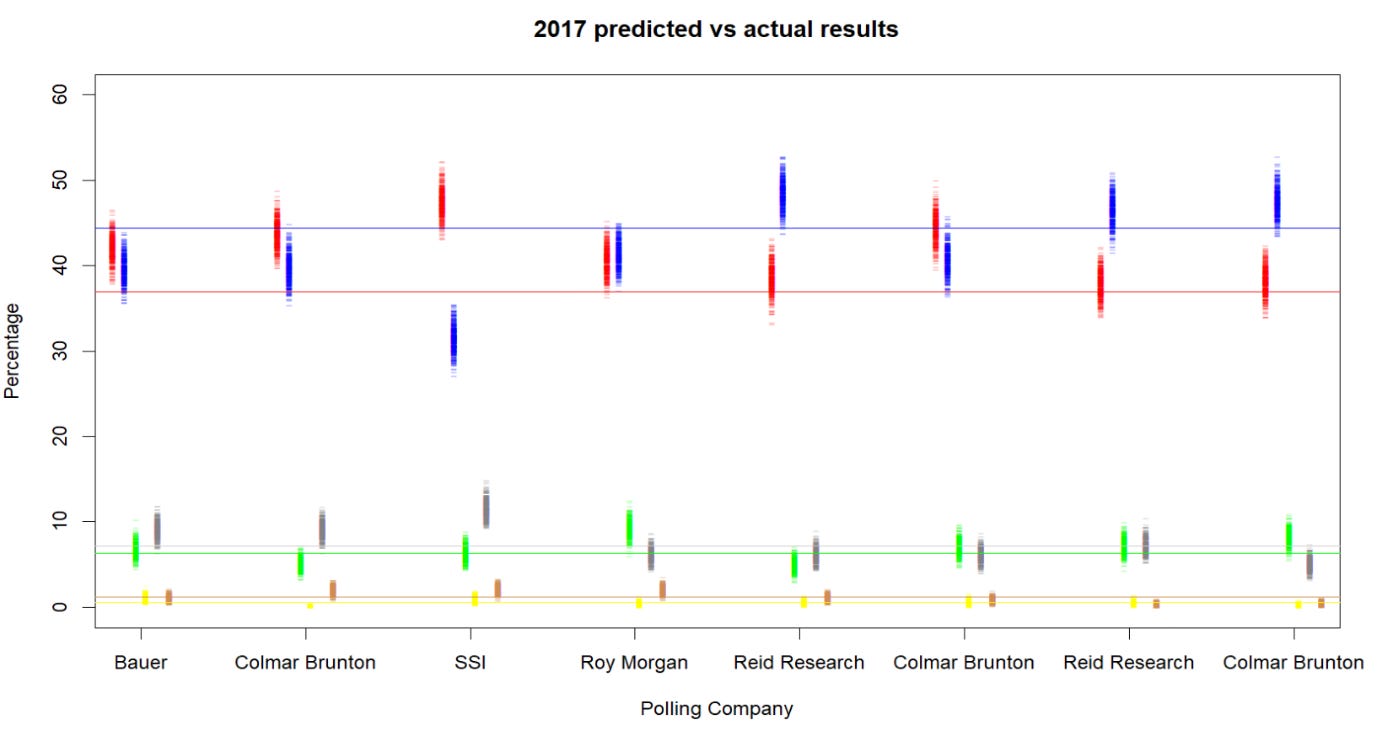

The New Zealand election in 2017 was a good example of that:

This graph shows the final 8 polls taken before the 2017 election. Each of the coloured blobs is what’s called a bootstrap of each poll result. This gives an idea of the range that would be thought likely for the election result, based on the poll. The horizontal lines are the actual election results.
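For anyone curious how a bootstrap like this works, here's a minimal sketch. It resamples a poll's respondents with replacement many times and records the spread of each party's share. The party names and shares below are made up for illustration, and I'm assuming a simple random sample of 1,000 people; the post doesn't say exactly how the blobs in the graph were generated.

```python
import random
from collections import Counter


def bootstrap_poll(shares: dict[str, float], n: int, reps: int = 1000,
                   seed: int = 1) -> dict[str, tuple[float, float]]:
    """Resample a poll of size n `reps` times; return a ~95% range per party."""
    rng = random.Random(seed)
    parties = list(shares)
    weights = [shares[p] for p in parties]
    draws: dict[str, list[float]] = {p: [] for p in parties}
    for _ in range(reps):
        # Resampling n responses with replacement from the poll is the same as
        # drawing n respondents who pick each party with the poll's shares.
        counts = Counter(rng.choices(parties, weights=weights, k=n))
        for p in parties:
            draws[p].append(counts[p] / n)
    ranges = {}
    for p in parties:
        values = sorted(draws[p])
        ranges[p] = (values[int(0.025 * reps)], values[int(0.975 * reps) - 1])
    return ranges


# Hypothetical poll of 1,000 people (illustrative numbers, not real figures).
poll = {"Party A": 0.40, "Party B": 0.37, "Others": 0.23}
for party, (lo, hi) in bootstrap_poll(poll, n=1000).items():
    print(f"{party}: {lo:.1%} to {hi:.1%}")
```

Each party ends up with a range roughly 6 points wide, which is the kind of spread the coloured blobs represent. If the actual result falls well outside that range, the poll missed by more than sampling error alone would explain.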

Most of them had at least one of the parties so far wrong that they weren’t even at the edge of the range of expected outcomes.

5 of them significantly underestimated National, and 5 significantly overestimated Labour.

The level of error in those polls was about what we would expect from polls of 200 people, rather than polls of 1000 people.
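The reason a 200-person poll is so much rougher than a 1,000-person one comes from the standard margin-of-error formula, 1.96 × √(p(1 − p)/n). This sketch uses p = 0.5 (the worst case) and assumes simple random sampling, which real polls only approximate.

```python
from math import sqrt


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """95% margin of error for a simple random sample of size n."""
    return z * sqrt(p * (1 - p) / n)


for n in (1000, 200):
    print(f"n={n:>4}: +/- {margin_of_error(n):.1%}")
# n=1000: +/- 3.1%
# n= 200: +/- 6.9%
```

Because the error shrinks with the square root of the sample size, a poll of 200 has about √5 ≈ 2.2 times the error of a poll of 1,000.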

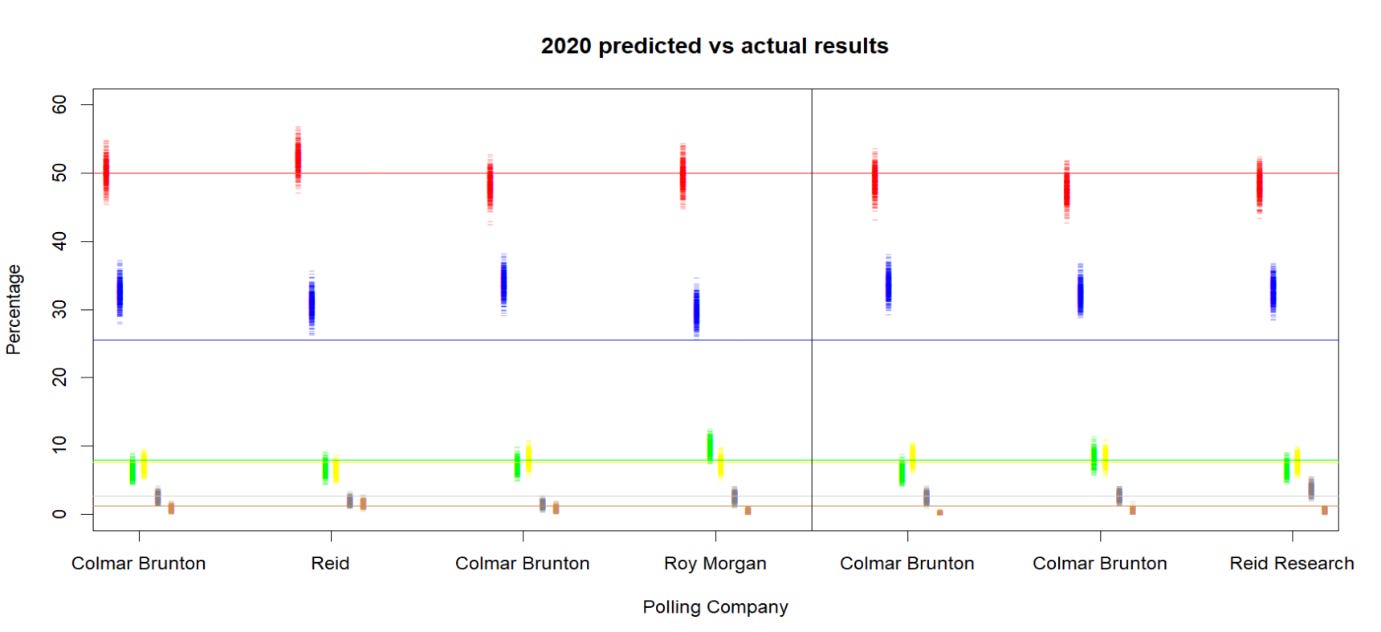

A similar thing happened last election, but in a different direction.

This time every single poll overstated National’s vote – even the last 3, run after early voting began.

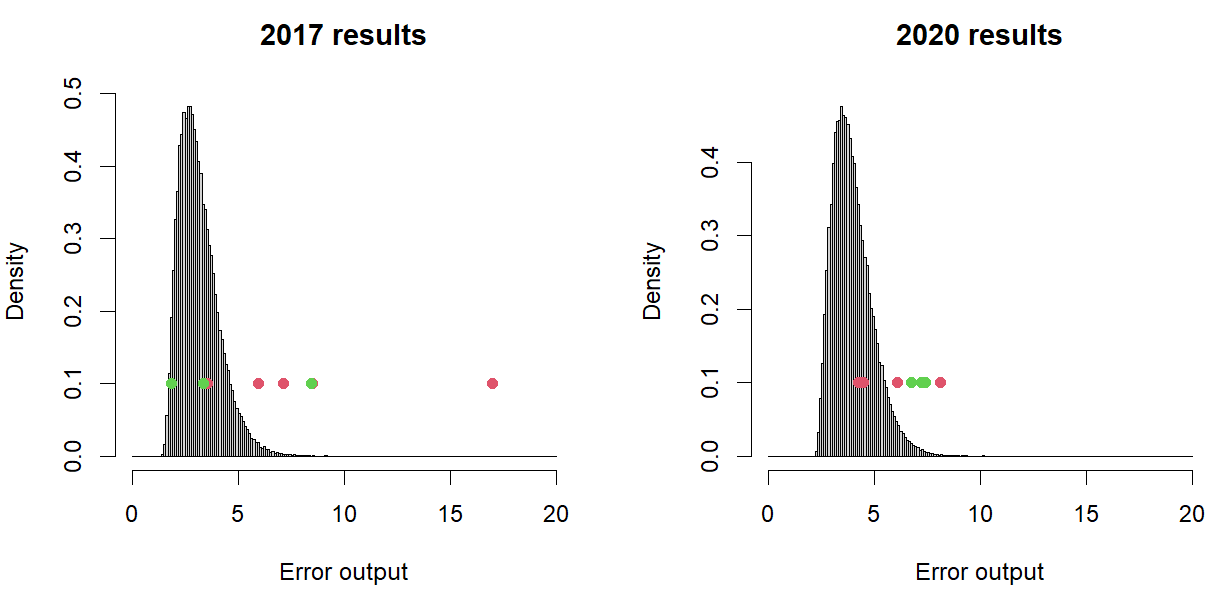

There are a number of ways of measuring how well a poll did – one of them (the one that I quite like) is to find the distance from the expected to the actual outcomes with a multi-dimensional application of Pythagoras’ Theorem.

We don’t expect many (or any) polls to have 0 error, but we expect them to mostly be reasonably close.
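Here's what that multi-dimensional Pythagoras looks like in practice: square the gap for each party, add them up, and take the square root. The party names and numbers are hypothetical, chosen so the answer comes out to a round number.

```python
from math import sqrt


def poll_error(poll: dict[str, float], result: dict[str, float]) -> float:
    """Euclidean distance (in percentage points) between a poll and the result.

    A party missing from one side is treated as 0%.
    """
    parties = poll.keys() | result.keys()
    return sqrt(sum((poll.get(p, 0.0) - result.get(p, 0.0)) ** 2 for p in parties))


# Hypothetical poll and result, in percent.
poll = {"Party A": 40, "Party B": 45, "Party C": 15}
result = {"Party A": 42, "Party B": 42, "Party C": 9}
print(poll_error(poll, result))  # 7.0  (sqrt(2² + 3² + 6²))
```

One number per poll makes it easy to compare polls against each other, at the cost of not showing which party the poll got wrong.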

Here are the poll results compared to the expected error:

The dark bars show the expected range of error. The dots show the actual error of each poll taken in the last few weeks, and the green ones are those taken after early voting had started. You can see that in 2017 there were 3 polls that were in the reasonable range. These were the two Reid Research polls and the last Colmar Brunton one.

In 2020, there were 2 polls that were within the expected error range – Roy Morgan and the first Reid Research poll.
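One way to produce an "expected error" range like the dark bars is to simulate lots of perfectly random polls from the actual result and measure how far each one lands, using the same Pythagoras-style distance. This is a sketch of that idea, not necessarily how the bars above were calculated. It assumes simple random sampling with no weighting, and the result figures are hypothetical.

```python
import random
from collections import Counter
from math import sqrt


def simulated_errors(result: dict[str, float], n: int, reps: int = 1000,
                     seed: int = 1) -> list[float]:
    """Sorted errors (percentage points) of `reps` simulated random polls of size n."""
    rng = random.Random(seed)
    parties = list(result)
    weights = [result[p] for p in parties]
    errors = []
    for _ in range(reps):
        # Draw n respondents from the true result, as a perfect random poll would.
        counts = Counter(rng.choices(parties, weights=weights, k=n))
        squared = sum((100 * counts[p] / n - result[p]) ** 2 for p in parties)
        errors.append(sqrt(squared))
    return sorted(errors)


result = {"Party A": 44.0, "Party B": 37.0, "Others": 19.0}  # hypothetical result (%)
errors = simulated_errors(result, n=1000)
print(f"95% of simulated polls were within {errors[int(0.95 * len(errors))]:.1f} points")
```

A real poll whose error sits well beyond that 95th-percentile distance is doing worse than sampling error alone can explain.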

Two elections, and the pollsters generally covered themselves in ignominy.

So what went wrong?

Is it that the pollsters all got it wrong, or was there a logical explanation for it?

First, it needs to be said that we’re calling the pollsters wrong for getting 4% or 5% away from the real result. We aren’t talking about being out by miles (excluding the 2017 SSI poll).

There can be a number of things that cause all (or almost all) the pollsters to get a result wrong. It can be that some demographics end up being less likely to vote. There are theories that rain on election day is bad for the left-wing parties, and warm weather on election day is good for the incumbent.

But in 2017 it was generally fine and the left-wing parties did badly. And in 2020 there were showers in parts of the country, and it was quite cold, and yet the incumbent left-wing party did spectacularly well.

So, it’s more than just weather

Some of it can be that the pollsters can only take a snapshot, so they can miss a trend. In 2017 there was a trend of dropping support for Labour throughout the last 2 weeks or so of the campaign. National’s attacks on Labour’s credibility on tax and spending did seem to start to hit home. If you plotted the poll results on a time series graph and drew a straight line through them for National and Labour, you’d end up close to where the actual results ended up.

For 2020, the factors were probably more complicated. There was a large increase in voter turnout, possibly due to the referendum questions attached to the election. The cannabis question may have motivated a number of Green voters who might not normally have turned out to vote. Greens got about 60,000 votes more than expected in that election. But even taking those 60,000 votes off the total doesn’t account for National’s bad result.

Another possible explanation is that National supporters just didn’t bother voting, knowing that the election was only going one way – against them. This is sometimes known as the self-fulfilling prophecy effect. Once polls start going against a party/person, their supporters become less inclined to go and vote.

But there’s another factor that also may have played a part. There was a huge number of special votes – over a quarter of the total votes cast. The election night data was much closer to the poll results, but the special votes went even more heavily in favour of Labour. Some of those came from New Zealanders living overseas who had seen coverage of Ardern on their local news. Others came from new voters who decided to enrol at the last minute.

Both of those groups are very difficult to poll, and as a result were left out of the poll results. Normally that isn’t a major issue, as the special votes tend to be fairly similar to the ordinary votes. But that was certainly not the case in 2020.

What does that mean for this election?

Well, first of all, it means that we probably shouldn’t treat any one poll as canonical. All the polling companies had bad polls in the lead-up to the last two elections. We also shouldn’t be too confident about making exact predictions based on the polls. Realistically, if we treat them as if they were polls of 500 people, then we’re more likely to get a reasonable estimate of the uncertainty.
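Treating a poll "as if it were 500 people" just means plugging a smaller effective sample size into the margin-of-error formula. Here's a sketch for a hypothetical party polling 35% in a poll that reports 1,000 respondents. It assumes simple random sampling at whatever effective size you choose; 500 is the rule of thumb from the paragraph above, not a precise figure.

```python
from math import sqrt


def poll_interval(share: float, n_effective: int,
                  z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% interval for a party's share, given an effective sample size."""
    moe = z * sqrt(share * (1 - share) / n_effective)
    return share - moe, share + moe


share = 0.35  # hypothetical party result from a poll reporting n = 1000
for n_eff in (1000, 500):
    lo, hi = poll_interval(share, n_eff)
    print(f"n_eff={n_eff}: {lo:.1%} to {hi:.1%}")
# n_eff=1000: 32.0% to 38.0%
# n_eff=500: 30.8% to 39.2%
```

Halving the effective sample size widens the interval by a factor of √2, roughly 1.4 times, which better reflects how far off the polls actually were in the last two elections.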

However, that doesn't mean that we should discount them completely.

As with 2017, there is a trend in these numbers. The right-wing bloc of National, ACT and New Zealand First is (as a group) gaining ground. In May the medians of their support added up to 50.3%. In June it was 50.65%. It moved up again in July to 51.1%. August was 51.5%, and so far in September their combined medians come to 52.0%.
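To put a number on that trend, here's an ordinary least-squares straight line through the five monthly figures above. Coding May to September as months 0 to 4 is my own choice, and so is extrapolating one month past the last point; treat the projection as illustrative only, since the September figure is partial and there's no guarantee the trend stays linear.

```python
def linear_fit(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares slope and intercept for y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


months = [0, 1, 2, 3, 4]                  # May through September
bloc = [50.3, 50.65, 51.1, 51.5, 52.0]    # combined medians, National + ACT + NZ First (%)
slope, intercept = linear_fit(months, bloc)
print(f"Trend: {slope:+.3f} points per month")                  # +0.425
print(f"One month on: {intercept + slope * 5:.1f}% (if the trend held)")  # 52.4%
```

A gain of about 0.4 points a month is small next to a poll's margin of error, which is why it shifts the likely result without pushing it outside the confidence intervals.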

That’s not a trend strong enough that you’d expect the election result to fall too far outside the confidence intervals, but it still suggests that the right-wing parties may out-perform the polling.

There isn’t a referendum in this election, so there’s less chance that we will have an elevated turnout due to that.

As far as special votes go, Chris Hipkins does not have the huge international profile that Jacinda Ardern had, and so there’s less likely to be a wave of votes coming his way from overseas. Current polling suggests that young people are less likely to support Labour than older people too, so the special votes are more likely to favour National and the Greens (although we need to be very careful about sub-group polls due to the tiny sample sizes and the potential for weighting-based skew).
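To show why weighting makes sub-groups even shakier, here's a sketch using Kish's approximate effective sample size, (Σw)² / Σw². The sub-group size and weights are entirely hypothetical: 80 young respondents in a 1,000-person poll, half weighted down to 0.5 and half weighted up to 2.0. The formula also assumes the weights aren't related to how people vote, which won't always hold.

```python
from math import sqrt


def kish_effective_n(weights: list[float]) -> float:
    """Kish's approximate effective sample size: (sum of w)^2 / sum of w^2."""
    total = sum(weights)
    return total ** 2 / sum(w * w for w in weights)


def margin_of_error(n: float, p: float = 0.5, z: float = 1.96) -> float:
    """95% margin of error assuming a simple random sample of size n."""
    return z * sqrt(p * (1 - p) / n)


# Hypothetical sub-group: 80 respondents with uneven (made-up) weights.
weights = [0.5] * 40 + [2.0] * 40
n_eff = kish_effective_n(weights)
print(f"Effective sample size: {n_eff:.1f}")                                 # 58.8
print(f"Margin of error, unweighted: +/- {margin_of_error(len(weights)):.1%}")  # 11.0%
print(f"Margin of error, weighted:   +/- {margin_of_error(n_eff):.1%}")       # 12.8%
```

Even before weighting, a sub-group of 80 has a margin of error of around 11 points. Uneven weights shrink the effective sample further, so a sub-group "finding" can easily be noise.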

Any self-fulfilling prophecy effect will also likely go against Labour. It’s much easier to get motivated to vote for a party if you think that they will win. There is certainly still a pathway to victory for Labour, but it’s looking increasingly unlikely.

If the polls continue to be similar to what they’ve been, I’d suggest that the most likely outcome is that the combination of the trend effect and the self-fulfilling prophecy effect will result in National slightly out-performing the polls on election night. The special votes could dampen that slightly, but they are more likely to move in the direction of National or the Greens rather than Labour this time.